AI and the in-between

A new personal chapter

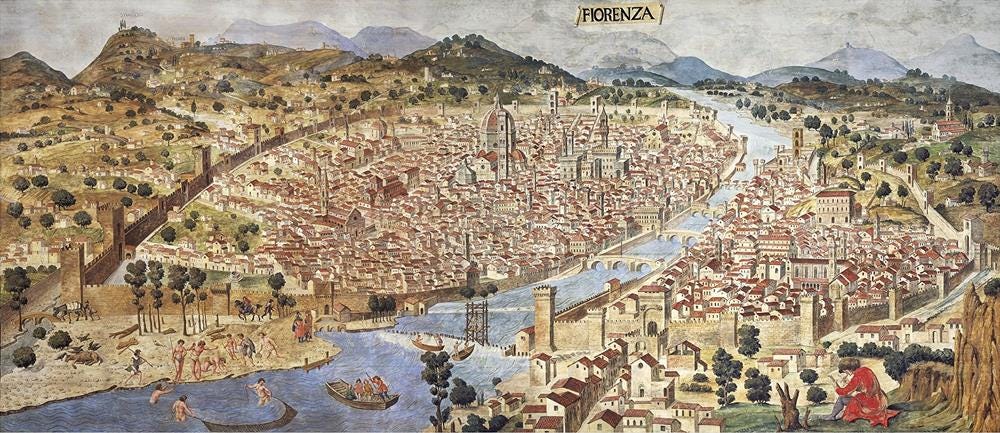

Of all the thought pieces and tweets on AI of late, my favourite has been a Dwarkesh interview with Renaissance historian and UChicago professor Ada Palmer. If you're a fellow history nerd, you will recognise many of the usual themes covered, such as the transition from villas to villages, the city of Florence cosplaying Rome, the Gutenberg press and the slow grind towards science. What Palmer does particularly well is frame these events through the systems beneath them: ideas, institutions and the information networks that drove broader societal and technological change. It's a striking example of how a short walk through the past can significantly rearrange how we see the present.

What stood out in particular was that, beneath all the innovation and discovery, transformative technologies don't change the world on arrival. They require entire ecosystems to catch up. Infrastructure, distribution, norms and ways of thinking can take decades to form. And often the biggest shifts come not from the tools themselves but from how people learn to use them differently. The Renaissance wasn't a neatly packaged moment of innovation; it was a long, messy process of trial, failure, reinterpretation and, eventually, new ways of reasoning about the world.

It's hard not to see the parallels with AI right now. We're clearly in a phase where capability is ahead of application: models are improving rapidly, but the surrounding systems, workflows and org structures are still catching up. Those new systems will define our future, both at a macro level and at a deeply individual level of purpose and identity.

Which is why I've decided to take a short sabbatical. It's something I've long wanted to try, and I can't think of a more opportune time than right now. Having just hit the two-year mark at Lumina, I didn't make the decision to leave lightly, but the team is in good hands and I remain in orbit in an advisory capacity. Looking ahead, here are a few key areas of interest I'm pursuing:

Building: getting close to the tools themselves (AI coding, agents, new workflows) to understand how building actually feels when execution is partially automated, and where the real constraints start to shift.

Teams that build: how design, engineering and product seem poised to collapse into a more unified workflow. What happens to roles, handoffs and team structure when individuals can operate across the stack and coordination becomes the primary challenge?

How companies keep up: beyond my own field, how are companies of different industries and sizes adapting to these shifts? In particular, how do they structure teams, make decisions, and evolve their culture and tooling to support a new way of operating?

As someone who's never really identified with a fixed role, I see this as a moment of expanded agency more than anything else. I feel fortunate to have a dedicated window of time to explore and create. I'll also be sharing my learnings and projects more openly here soon.

If there's one other thread from Palmer and Dwarkesh's conversation that stuck with me, it's that none of this happens in isolation. If you're exploring this space in any capacity, or even just trying to make sense of it from a completely different industry, I'd love to hear from you.

Field notes:

Buccocapital's Where Are You in the Context Supply Chain? is a standout read on why AI's real disruption isn't exactly jobs but the coordination layer between the people who build things and the people who sell them. The more interesting argument is about incumbents: you can't drop AI into an org chart built around context-carrying and expect it to restructure itself.

"The goal is to become a token orchestrator before you become a token orchestrated by someone else."

Box founder Aaron Levie's latest field notes, from a week with enterprise AI and IT leaders across banking, retail and healthcare, are a useful reality check on where actual adoption stands. Steven Sinofsky's response is a deeper technical read on why "just give us an API" is a lot harder than it sounds.

"Most companies are not talking about replacing jobs due to agents. The major use-cases for agents are things that the company wasn't able to do before or couldn't prioritise."

Harrison Chase's Your Harness, Your Memory is a useful primer on how agent memory actually works under the hood, and on why the harness managing it matters more than the model itself. I've learned a lot about this firsthand while setting up my own personal OS agent. On a strategic level, Gupta's accompanying piece, Anthropic sees the moat. Do you?, explores whether the next great moat in enterprise AI may be not intelligence alone but trust. Permission to act directly inside systems and workflows creates a depth of integration that makes switching not just inconvenient but genuinely costly. Memory is how that trust compounds over time.

If there's a leading blueprint for what good AI adoption actually looks like in practice, Ramp's Seb Goddijn's write-up on Glass is it. They hit 99% AI tool adoption company-wide, then realised individual wins weren't translating into organisational capability: people were getting value in isolation but not sharing it. So they built Glass, an internal AI productivity suite with a shared skills marketplace.

"The single most important thing we learned: the people who got the most value weren't the ones who attended our training sessions. They were the ones who installed a skill on day one and immediately got a result. The product taught them faster than we ever could."

Linear's Issue tracking is dead felt like a masterclass in getting ahead of a tidal shift. Long seen as a sleeker Jira, Linear has pulled off a genuine pivot here, establishing clean differentiation and a clear narrative around its agentic focus. CEO Karri Saarinen laid out a lot of this thinking earlier in the year with The Disappearing Middle of Software Work. They're now bringing receipts.

Andrej Karpathy shares his thoughts on AI's potential to help people increase the visibility, legibility and accountability of their governments. In a similar vein, Jennifer Pahlka writes on why philanthropy should fund AI in government: to help rebuild broken services and to let agencies own the tools that give them better control over their own future.

Dwarkesh Patel’s interview with Ada Palmer

If you enjoyed this from a historical lens, a great follow-up is Barbara W. Tuchman’s A Distant Mirror.
